
The following notations will be used throughout this article. In the second place, and more important, no one really knows what entropy really is, so in a debate you will always have the advantage.' In the first place your uncertainty function has been used in statistical mechanics under that name, so it already has a name. Von Neumann told me, 'You should call it entropy, for two reasons. I thought of calling it 'information,' but the word was overly used, so I decided to call it 'uncertainty.' When I discussed it with John von Neumann, he had a better idea. Shannon explained the name 'entropy' in (McIrvine and Tribus 1971): Several entropy measures are discussed in this article: von Neumann entropy, Rényi entropy, Tsallis entropy, Min entropy, Max entropy, and Unified entropy. Surprisingly, von Neumann entropy was introduced by von Neumann, (1932), almost 20 years before Shannon entropy was in (Shannon 1948). Von Neumann entropy is a natural generalization of the classical Shannon entropy. Other applications include quantum algorithms, quantum cryptography, or statistical physics as discussed in (Ohya and Watanabe 2010). Schumacher 1996) or quantum communication (Ohya and Volovich 2003), (Ozawa and Yuen 1993), (Holevo 1998).

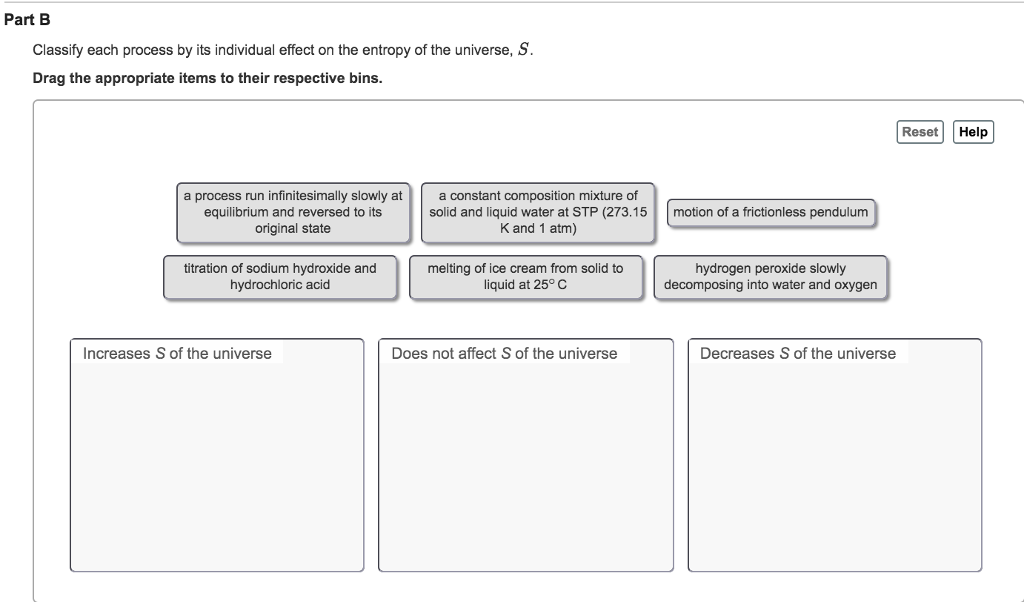

It is crucial in the theory of entanglement (e.g. In information theory, entropy is a measure of randomness or uncertainty in the system. image for different feature order selections: the decreasing rank order selection.
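As a small numerical illustration of the statement that von Neumann entropy generalizes Shannon entropy, the sketch below compares the two for a single qubit. It is only a sketch under stated assumptions: the function names are hypothetical, the logarithm is taken base 2 (entropy in bits), and the state is restricted to a 2x2 density matrix so that its eigenvalues can be written in closed form without a linear-algebra library.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy H(p) = -sum_i p_i log2 p_i, in bits (0 log 0 := 0)."""
    return sum(-p * math.log2(p) for p in probs if p > 0.0)


def qubit_eigenvalues(a, b):
    """Eigenvalues of the qubit density matrix [[a, b], [conj(b), 1 - a]].

    Assumes 0 <= a <= 1 and |b|^2 <= a(1 - a), so the matrix is a valid state.
    For a 2x2 matrix with unit trace the eigenvalues are
    (1 +/- sqrt(1 - 4 det)) / 2, where det = a(1 - a) - |b|^2.
    """
    det = a * (1.0 - a) - abs(b) ** 2
    # Clamp tiny negative values caused by floating-point rounding.
    disc = math.sqrt(max(0.0, 1.0 - 4.0 * det))
    return [(1.0 + disc) / 2.0, (1.0 - disc) / 2.0]


def von_neumann_entropy_qubit(a, b=0.0):
    """Von Neumann entropy S(rho) = -Tr(rho log2 rho) of a qubit state.

    S(rho) is the Shannon entropy of the eigenvalues of rho.
    """
    return shannon_entropy(qubit_eigenvalues(a, b))


# Diagonal state: the eigenvalues are the diagonal entries, so the
# von Neumann entropy coincides with the Shannon entropy of (0.25, 0.75).
diagonal_state = von_neumann_entropy_qubit(0.25)
classical = shannon_entropy([0.25, 0.75])

# The pure state |+><+| and the maximally mixed state share the diagonal
# (0.5, 0.5), yet only the mixed state carries entropy.
pure_state = von_neumann_entropy_qubit(0.5, 0.5)
mixed_state = von_neumann_entropy_qubit(0.5)
```

For a diagonal density matrix the two quantities agree, which is the sense in which the quantum entropy extends the classical one. The off-diagonal entry `b` has no classical counterpart, and it is what separates the pure state from the maximally mixed state in the last two lines.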

Anna Vershynina, University of Houston, Houston, TX, United States

Sarah S Chehade, University of Houston, Houston, TX, United States
